Your Copilot's thought process

- Why does AI tend to hallucinate?

- How does AI create elaborate stories, and how could this impact users?

- Which AI chatbot is the most prone to hallucinations? And why?

Unfortunately, the term 'hallucinate' has entered public use as a catch-all for software errors in applications driven by large language models (LLMs). Historically, software errors produced illegible error codes, blue screens and spontaneous system restarts. LLM-driven applications, such as chatbots, fail differently: the output is fluent and plausible but wrong, which presents new challenges for service providers and consumers alike.

Generally available models like ChatGPT, Claude or Gemini are trained on large volumes of text. That text is split into tokens, and each token is represented as an embedding. Think of embeddings as numerical coordinates in high-dimensional space.

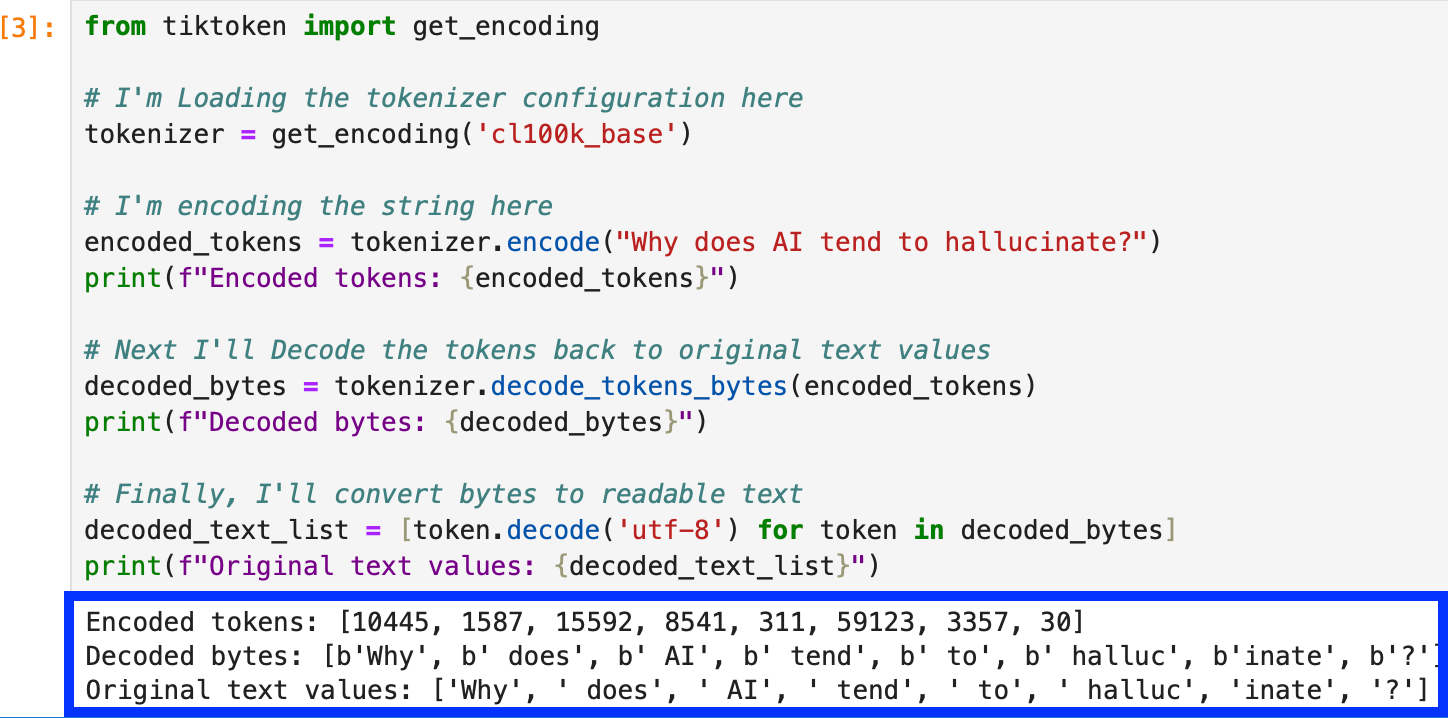

Check out this embedding example where we embed the first inquiry, "Why does AI tend to hallucinate?"

Notice how the words have been split?
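To make the splitting concrete, here is a minimal sketch in Python. It is not how ChatGPT, Claude or Gemini tokenize text: the vocabulary `TOY_VOCAB`, the greedy longest-match rule, and the 4-dimensional random vectors are all assumptions chosen for illustration. Real models learn vocabularies of tens of thousands of pieces and embeddings with hundreds or thousands of dimensions.

```python
import random

# Hypothetical toy vocabulary of word pieces; "<unk>" stands in for anything unknown.
TOY_VOCAB = ["<unk>", "why", "does", "ai", "tend", "to", "hall", "uc", "inate", "?"]
TOKEN_IDS = {piece: i for i, piece in enumerate(TOY_VOCAB)}

DIM = 4  # assumed embedding size, far smaller than in real models
_rng = random.Random(0)  # fixed seed so the made-up coordinates are repeatable
EMBEDDINGS = [[_rng.uniform(-1, 1) for _ in range(DIM)] for _ in TOY_VOCAB]


def tokenize(text):
    """Split text into vocabulary pieces using greedy longest-match."""
    tokens = []
    for word in text.lower().split():
        start = 0
        while start < len(word):
            # Try the longest remaining slice first, then shrink it.
            for end in range(len(word), start, -1):
                piece = word[start:end]
                if piece in TOKEN_IDS:
                    tokens.append(piece)
                    start = end
                    break
            else:
                # No known piece starts here: emit "<unk>" and skip one character.
                tokens.append("<unk>")
                start += 1
    return tokens


def embed(text):
    """Pair each token with its coordinates in the toy embedding space."""
    return [(tok, EMBEDDINGS[TOKEN_IDS[tok]]) for tok in tokenize(text)]


if __name__ == "__main__":
    for token, vector in embed("Why does AI tend to hallucinate?"):
        print(f"{token:>6} -> {[round(x, 2) for x in vector]}")
```

With this toy vocabulary, "hallucinate?" is broken into the pieces `hall`, `uc`, `inate` and `?`. The model never sees the word as a human does, only a sequence of pieces and their coordinates.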

Chatbots interpret words much as humans experience a foreign language. A chatbot tries to interpret and respond, but there is a probability the response will be wrong; that probability is determined by training and fine-tuning. Using the bot like a search engine may return an error that incorrectly associates a user with a criminal act. Identifying the user in the training data used to create the model is one challenge; assessing the harm to that user is another. For most of the population in the distribution, the impact is likely to be benign. We could see more serious outcomes for uneducated or vulnerable outliers in the distribution.
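The idea that a wrong response has a probability can be sketched with a few lines of Python. The prompt, candidate answers and scores below are invented for illustration and do not come from any real model. The sketch only shows the usual softmax step, which turns a model's raw scores into probabilities that sum to one.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical prompt: "The capital of France is ..."
CANDIDATES = ["Paris", "Lyon", "Berlin"]
SCORES = [4.0, 2.0, 1.0]  # made-up raw scores, one per candidate

PROBS = softmax(SCORES)
P_WRONG = 1.0 - PROBS[CANDIDATES.index("Paris")]

if __name__ == "__main__":
    for name, p in zip(CANDIDATES, PROBS):
        print(f"{name:>6}: {p:.3f}")
    print(f"chance of a wrong answer: {P_WRONG:.3f}")
```

Even with the correct answer scored highest, these invented scores leave roughly a 16% chance of sampling a wrong one. Training and fine-tuning shift the scores, which shrinks the chance of error without ever reducing it to zero.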

It's highly likely you're not the only [Insert your name here] who has existed throughout history. Do not assume that a chatbot's response to an inquiry about [Insert your name here] refers to you.

Chatbots and LLMs are becoming widespread and commoditized. Providers with large active user populations and publicly exposed applications will see those applications tested and exploited beyond the terms of service, whether they are willing or not.

There's no such thing as a 100% secure application, and a lot of money has been made from insecure software. It is near certain that companies pressured by investors awaiting returns will take risks to realize cash flow and profit.

Executive risk awareness, consumer education and pragmatic policies are essential safeguards for responsible use.